
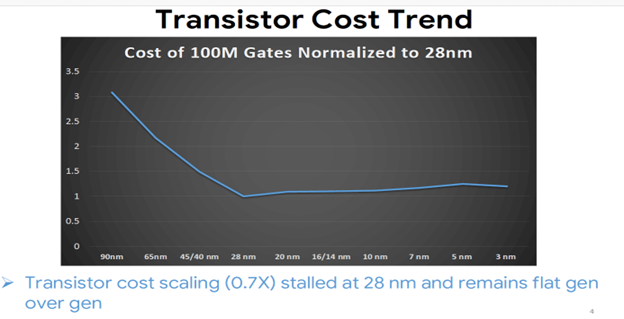

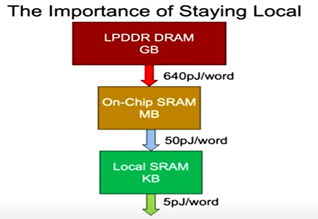

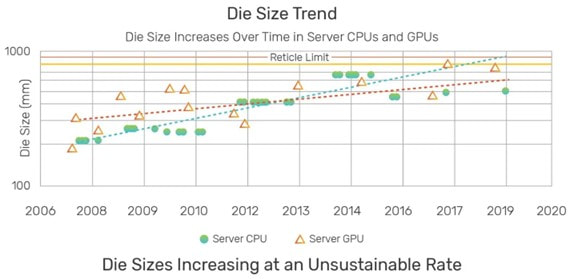

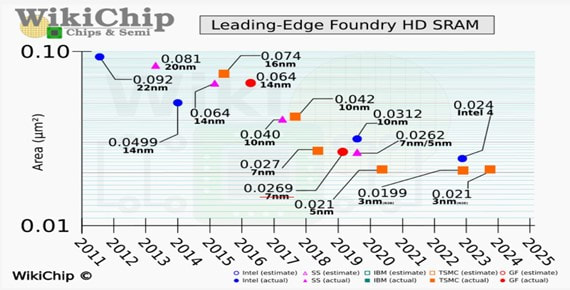

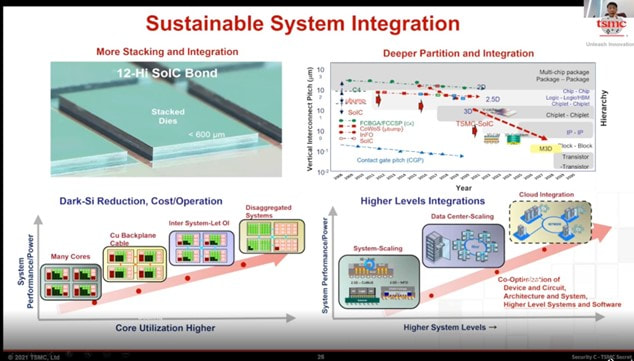

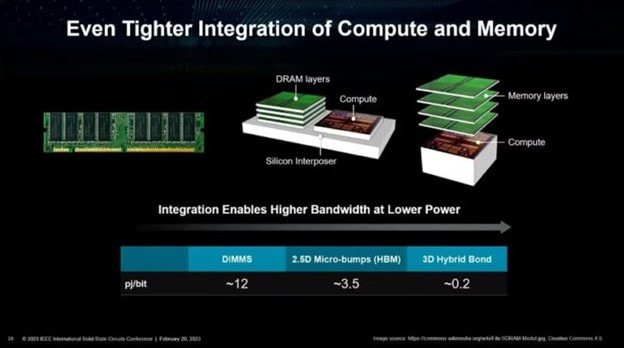

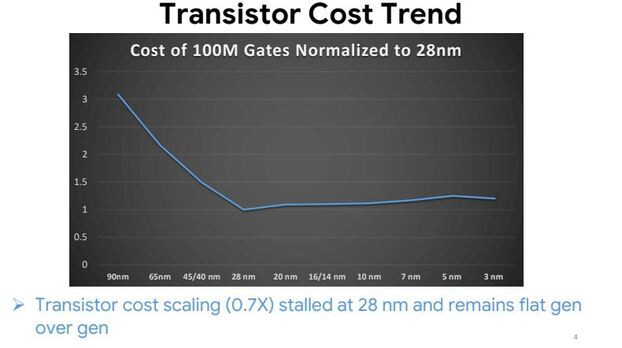

The Path Forward – 3D Integration with Hybrid Bonding

In the dynamic field of semiconductor technology, the ongoing discourse surrounding Moore's Law has evolved notably, prominently featuring the 2014 assertion by Zvi Or-Bach (CEO of MonolithIC 3D). His statement that transistor cost scaling reached a pivotal juncture at 28 nm has attracted significant attention. The statement was recently validated by Milind Shah of Google in the Short Course (SC1.6) at IEDM 2023. The unequivocal statement, "Transistor cost scaling (0.7X) stalled at 28 nm and remains flat gen over gen", confirms what was foreseen in public viewpoints and blogs from 2014 that predicted the end of Moore's Law.

Source: Google, IEDM23 S.C1.6

Despite the stalling of cost scaling, why is the industry still pushing for ever-smaller transistors, aiming for a mind-boggling 1 nm node? The answer lies in system-level benefits, as illustrated by this chart from Bill Dally, Chief Scientist at NVIDIA.

Source: Bill Dally, Berkeley EECS, Nov 30, 2022.

This, in turn, drives the trend of leading computing devices such as CPUs and GPUs reaching reticle size and beyond. The pursuit of ever-smaller nodes allows even tighter integration of components on the chip, further boosting performance and efficiency.

Source: AMD

Unfortunately, the fabrication processes for logic and memory (DRAM, NAND) are very different. Accordingly, they are produced on different wafers and cannot be integrated by scaling. To make matters worse, SRAM bit-cell scaling stopped at the 5 nm node.

Source: WikiChip

It seems that both AMD and TSMC understood these trends and, over the last couple of years, adopted hybrid bonding technology to enable future progress in computing performance.

Source: TSMC

Source: Dr. Lisa Su, AMD, ISSCC 2023

Tighter Integration Of Compute And Memory

While current implementations like AMD's 3D V-Cache represent a stepping stone toward the full potential of 3D integration, significant hurdles remain. These include a fundamental shift in architectural thinking, moving from traditional edge interconnect to a novel 3D integration approach. Furthermore, achieving widespread adoption will require innovations in system-level redundancy, wafer-scale integration, and even on-chip RF networks. For a glimpse into this ambitious vision, look no further than Chapter 15* of the NANO-CHIPS 2030 book, aptly titled "A 1000× Improvement of the Processor-Memory Gap." (*Download the chapter here)


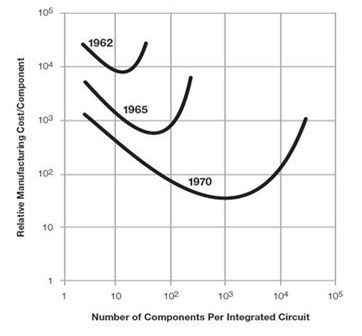

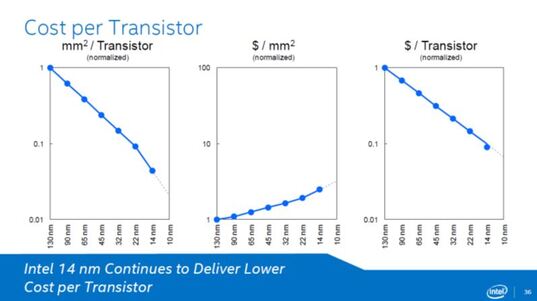

Yet at the right time, in 2014, Zvi was the only one to state clearly: "Moore’s Law has stopped at 28nm!"

In the dynamic field of semiconductor technology, the ongoing discourse surrounding Moore's Law has evolved notably, prominently featuring the 2014 assertion by Zvi Or-Bach (CEO of MonolithIC 3D). His statement that transistor cost scaling reached a pivotal juncture at 28 nm and remained static across four subsequent generations has attracted significant attention. The statement was recently validated by Milind Shah of Google in the Short Course (SC1.6) at IEDM 2023. The unequivocal statement "Transistor cost scaling (0.7X) stalled at 28 nm and remains flat gen over gen" confirms what was foreseen in public viewpoints and blogs from 2014 that predicted the end of Moore's Law.

A Historical Perspective on Moore's Law

Moore's Law, as originally stated, is: "The complexity for minimum component costs has increased at a rate of roughly a factor of two per year (see graph on next page). Certainly over the short term this rate can be expected to continue." Accordingly, it envisioned that the cost optimum would be achieved by a regular doubling of transistor density, a cadence Moore later (in 1975) revised to every two years. Looking back on the journey from 1 micron to 0.1 micron reveals numerous predictions of the 'End of Moore's Law,' many of which did not prove accurate. It was in this context that Zvi Or-Bach's unequivocal statement in 2014 stood out, declaring decisively, "Moore’s Law has stopped at 28nm!" At the time, Intel kept declaring that Moore's Law would prevail for many years to come. Intel published its roadmap to keep reducing cost per transistor for the foreseeable future, as indicated by their following slide.
Zvi did reference Intel's claims in his 2014 article in EE Times https://www.eetimes.com/intel-vs-intel/

Zvi Or-Bach's 2016 Technical Assertion

Building on his 2014 insights, Zvi Or-Bach delved deeper in 2016, presenting a technical analysis that underlined the stagnation of transistor cost scaling at 28 nm. His meticulous examination considered factors such as escalating lithography cost and transistor complexity (HKMG, FinFET), which drive up wafer costs, ultimately asserting that this node marked the end of Moore's Law in terms of cost scaling.

The latest technical discussions at IEDM 2023, presented by Google in Short Course SC1.6, have substantiated Zvi Or-Bach's assertion from 2014. The technical validation that "Transistor cost scaling (0.7X) stalled at 28 nm and remains flat gen over gen" stands as a pivotal milestone. This empirical confirmation not only grounds the ongoing debate in tangible data but also fortifies the technical credibility of Zvi's initial forecast.

Follow-up Publications and Referenced Insights

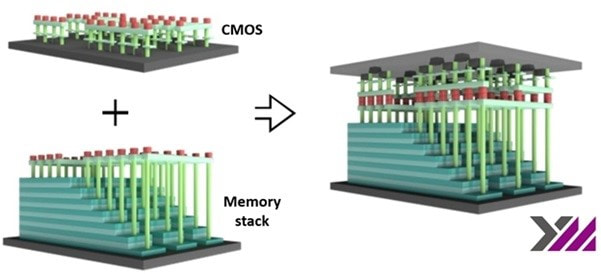

The impact of Zvi Or-Bach's 2014 publications reverberated through the industry, as evidenced by a cascade of follow-up publications citing his work. Design and Reuse, PhoneArena, SmartBrief, EE Times Asia, and others recognized the significance of the technical insights, amplifying the discussion and contributing to the evolving narrative surrounding the end of Moore's Law.

2024 is poised to be the inflection point for the commercial use of hybrid bonding, which is set to become a central technology in all major semiconductor segments: logic, DRAM, and NAND. YMTC introduced hybrid bonding for 3D NAND a few years ago, and now Kioxia/WD, one of the main 3D NAND vendors, is also bringing a 218-layer (BiCS8) 3D NAND product that uses hybrid bonding into volume production. This indicates the coming trend in NAND devices, termed "CMOS Directly Bonded to Array" (CBA).

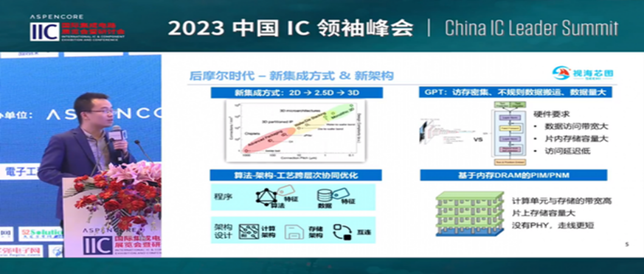

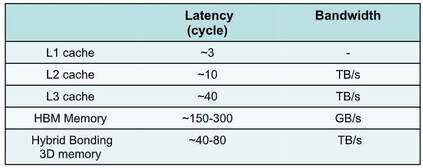

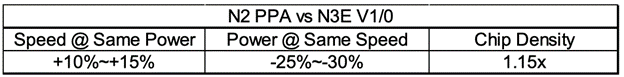

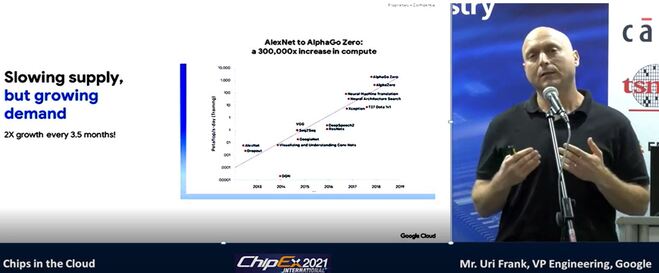

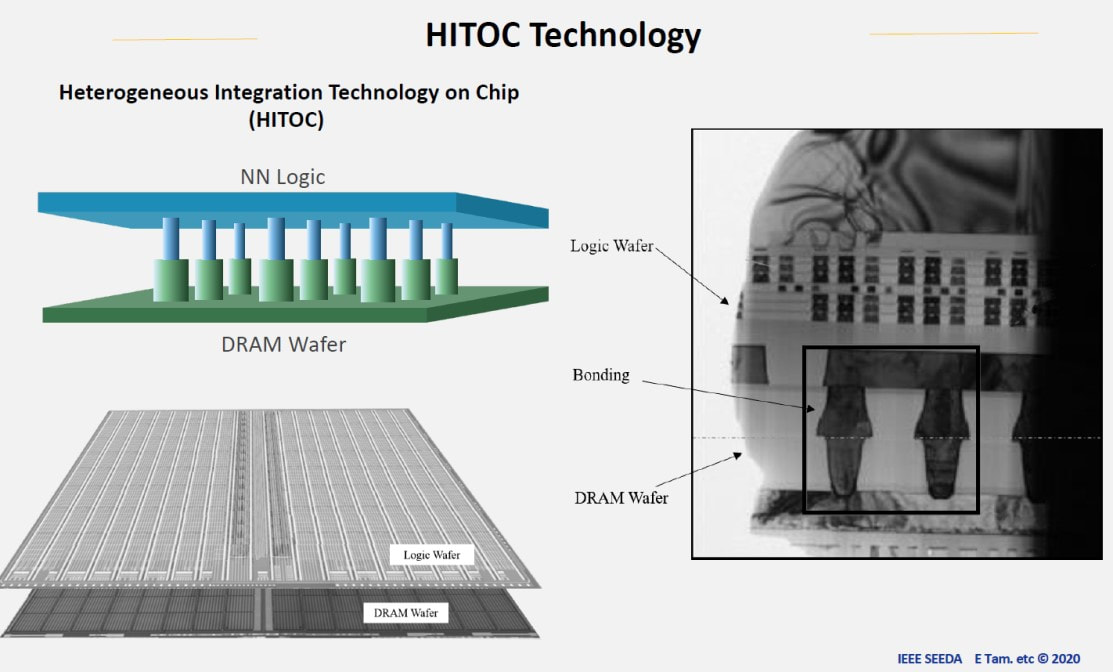

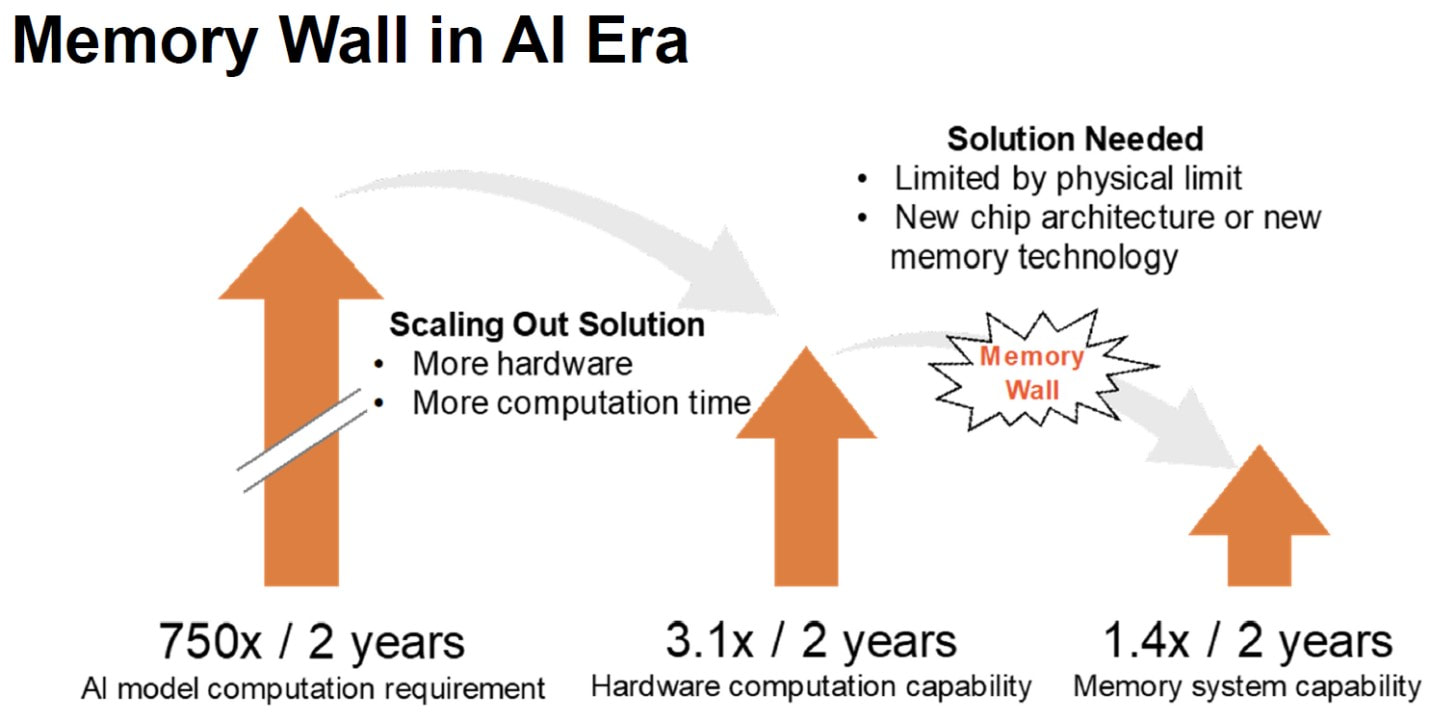

For DRAM, it has been announced that the high-bandwidth memory (HBM) product HBM4 will be introduced in 2025 using hybrid bonding. Additionally, all DRAM vendors are actively pursuing 3D DRAM development using hybrid bonding. Samsung has disclosed an alternative for DRAM using a 4F² cell and hybrid bonding, which will be presented at this year's IEDM and may reach the market soon.

Early use of hybrid bonding in logic products took place in 2023 with AMD's 3D V-Cache technology. In 2024, Intel is scheduled to release its 20A process with backside power delivery (BSPD), which Intel calls PowerVia; it also relies on bonding and extreme thinning technology. Extreme thinning overcomes one of the handicaps of 3D technologies, through-silicon vias (TSVs), by enabling nano-TSVs, which are essentially simple via processes. As major foundries embrace extreme thinning, many new applications of 3D heterogeneous integration using hybrid bonding are expected to emerge soon after.

AI computing could become the next-generation technology driver in 2024. As previously reported, China may win in AI computing by using hybrid bonding to place DRAM over the processor, bridging the "memory wall" through an approach called near-memory computing. While the current players are not first-tier vendors, this is expected to change due to the insatiable appetite of Large Language Model (LLM) vendors for computing power. According to OpenAI's calculations, the amount of compute used in AI training has grown exponentially since 2012, doubling every 3.43 months on average. By that measure it has expanded 300,000 times, far exceeding the growth rate of hardware computing power. One indication of this emerging trend is the press release issued this week by UMC, titled "UMC Launches W2W 3D IC Project with Partners, Targeting Growth in Edge AI."

Conclusion

Hybrid bonding is becoming a key technology driving the next generation of semiconductor devices.
2024 is likely to be the pivotal point for hybrid bonding adoption across all major semiconductor segments, including logic, DRAM, and NAND. Additionally, hybrid bonding is expected to play a major role in the development of next-generation AI computing solutions.

Leveraging Hybrid Bonding as an Alternative to Dimensional Scaling

Shortly after ISSCC 2022, we were intrigued by Alibaba's presentation of a paper titled "184QPS/W 64Mb/mm2 3D Logic-to-DRAM Hybrid Bonding with Process-Near-Memory Engine for Recommendation System." The paper claimed a remarkable improvement of over 1,000 times in AI computing. We promptly published a blog about this breakthrough titled “China May Win in AI Computing”. However, we could not find any indication of Alibaba's revolutionary technology being adopted in the English-language electronics media until we stumbled upon TechInsights' advertisements for the Jasminer device teardown. We then conducted a follow-up study of Chinese electronics media using Google Translate and uncovered numerous other activities by Chinese semiconductor vendors.

It is well known that the US restrictions on state-of-the-art semiconductor equipment and computing chips have significantly impacted China's competitive position in the field of AI. Nevertheless, it seems that certain Chinese companies are now exploring alternative approaches, such as hybrid bonding, as evidenced by the coverage below.

Let's start with a bit of background. In a paper presented at the 2017 IEEE S3S conference, titled "A 1,000x Improvement in Computer Systems by Bridging the Processor-Memory Gap," we introduced the potential of using hybrid bonding to overcome the "Memory Wall." We further elaborated on this concept in a book chapter titled "A 1000× Improvement of the Processor-Memory Gap," in the book NANO-CHIPS 2030, published in 2020.
On June 15, 2022, a Chinese article titled "Breakthrough Bypassing the EUV Lithography Machine to Realize the Independent Development of DRAM Chips" reported that Xinmeng Technology had announced the development of a 3D 4F² DRAM architecture based on its HITOC technology. The company claimed that this architecture could serve as an alternative to advanced commercial DRAM. According to the article, this development represents another major breakthrough in heterogeneous integration technology, following the release of SUNRISE, an all-in-one AI chip for storage and computing. The article also reported that Haowei Technology had successfully used Xinmeng's HITOC technology for the latest tape-out of the Cuckoo 2 chip, resulting in a large-capacity, storage-computing integrated 3D architecture.

TechInsights is currently promoting a report titled "China breaks through restrictions with advanced chiplet strategy: 3D-IC Breakthrough in Chinese Ethereum Miner." According to the report, TechInsights discovered a storage mining design in the Jasminer X4 Ethereum miner ASIC, developed by the China-based manufacturer Jasminer. The report states that this design is the industry's first-ever use of DBI hybrid bonding technology with DRAM. TechInsights highlights that the Jasminer X4 demonstrates how a Chinese company can combine mature technologies to creatively produce high-performance, cutting-edge applications even under trade restrictions. The report also reveals that the X4 features a sizable 32 mm × 21 mm logic die that employs the older XMC (Wuhan Xinxin Semiconductor Manufacturing Co.) planar 40/45 nm CMOS process.

During the 2023 China IC Leaders Summit, Seehi founder Xu Dawen gave a speech entitled "DRAM Storage and Computing Chips: Leading the Revolution of AI Large-Scale Computing Power." The company has published the speech on its website, and we have selected some of the key statements from it.
“From the trend of AI development and restricted hardware, it can be seen that the scale of AI models increases by more than one order of magnitude every 2-3 years, while the peak computing power of chips increases by an average of 3 times every two years, lagging behind the development of AI models. However, memory performance lags behind even more, with an average increase of 80% in memory capacity every two years, a 40% increase in bandwidth, and almost no change in latency.”

“At present, it is known that a single model requires more than 2,600 servers, which cost about 340 million U.S. dollars and consume about 410,000 kWh of electricity per day.”

“Different from models with CNN as the main framework, GPT is characterized by intensive memory access, irregular data handling, and insufficient data reuse.”

In his talk, Xu referenced the work published by Alibaba at ISSCC 2022: “In 2022, Bodhidharma Academy [Alibaba DAMO Academy] and Tsinghua Unigroup stacked 25nm DRAM on 55nm logic chips to build neural network computing and matching acceleration in recommendation systems. The system bandwidth reaches 1.38TBps. Compared with the CPU version, the performance is 9 times faster, and the energy efficiency ratio exceeds 300 times.”

During his speech, Xu Dawen presented a table that compared different alternatives to memory cache.

Table 1: Memory latency and bandwidth comparison

“Through 3D stacking technology, we can reduce the distance between the processor and DRAM to the micron or even sub-micron level. In this case, the wiring is very short and the delay is relatively small. With this technology, thousands or even hundreds of thousands of interconnection lines can be made per square millimeter, achieving higher bandwidth. In addition, eliminating the PHY and shortening the wiring result in lower power consumption and better cost performance. The entire chip is composed of multiple tiles, and each tile is stacked by DRAM and logic.
The DRAM part mainly provides high storage capacity and high transmission bandwidth, and the logic part mainly provides high computing power and efficient interconnection. A NoC handles communication between the tiles. This NoC is an in/through NoC design: it is interconnected with adjacent tiles on the same plane and communicates with the memory in the vertical direction.”

Finally, he referenced their device called SH9000 GPT, “which is based on the customer's algorithm and is integrated around the architecture layer, circuit layer, and transistor layer across layers. This set of technologies has successfully achieved excellent cost and energy efficiency ratios on mining machine chips. The theoretical peak power consumption of the SH9000 chip design may be slightly lower than that of the A100 (Nvidia), and the actual RTOPS is expected to be twice that of the competitor, which can achieve a better energy efficiency ratio.”

Considering that both the logic and DRAM devices were manufactured in China using relatively "old" nodes and still perform competitively against TSMC's state-of-the-art devices and systems, this should be a matter of concern for all of us. This is particularly true since improvements from node scaling alone are no longer as significant as they used to be. The following table, released by TSMC for their 2025 N2 node, illustrates this point.

Table 2: N2 PPA comparison.

Undoubtedly, the US restrictions on state-of-the-art semiconductor equipment and computing chips have greatly impacted China's competitive position in the field of AI. However, it appears that some Chinese companies are now exploring alternative approaches, such as hybrid bonding and heterogeneous system integration. While this new path presents its own challenges, it offers unprecedented advantages. Delaying the adoption of this new approach could result in an insurmountable gap in the future. The following chart from Applied Materials illustrates this point clearly.
This helps explain the >1,000x AI computing improvement achieved by Alibaba: "Bonding stacked dies into a single package instead of multiple packages on a PCB increases I/O density by 100x. The energy per bit transferred can be reduced by 30x with the latest technology."
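As a rough, back-of-the-envelope reading of the two Applied Materials figures quoted above, one can ask how they combine. The sketch below assumes (our assumption, not Applied Materials') that the 100x I/O-density gain and the 30x energy-per-bit gain are independent, so that a combined figure of merit, bits moved per unit area per unit energy, is simply their product. The constant and function names are illustrative only.

```python
# Back-of-the-envelope sketch, not an Applied Materials model.
# Assumption: the I/O-density gain and the energy-per-bit gain are independent,
# so a combined "bits per unit area per unit energy" figure of merit multiplies them.

IO_DENSITY_GAIN = 100.0      # bonded stacked dies vs. separate packages on a PCB
ENERGY_PER_BIT_GAIN = 30.0   # reduction factor in energy per transferred bit


def combined_gain(io_density_gain: float, energy_per_bit_gain: float) -> float:
    """Return the product of two independent improvement factors."""
    return io_density_gain * energy_per_bit_gain


if __name__ == "__main__":
    gain = combined_gain(IO_DENSITY_GAIN, ENERGY_PER_BIT_GAIN)
    print(f"Combined figure of merit: {gain:,.0f}x")
    print("Exceeds 1,000x:", gain > 1_000)
```

Under that independence assumption the product is about 3,000x, which makes a >1,000x system-level gain plausible; in practice the two effects interact, and the realized gain depends on the architecture and workload.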

Demonstrating:

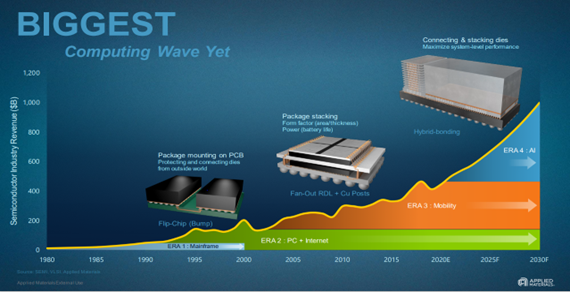

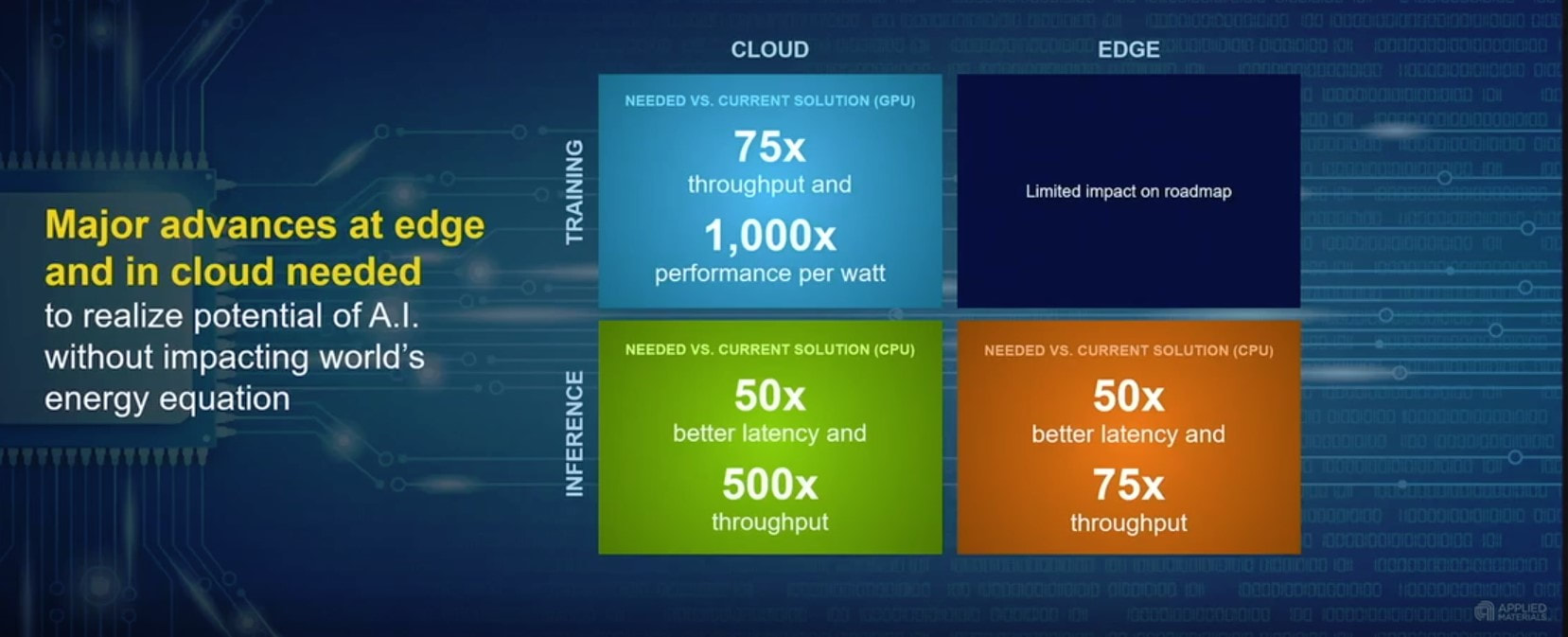

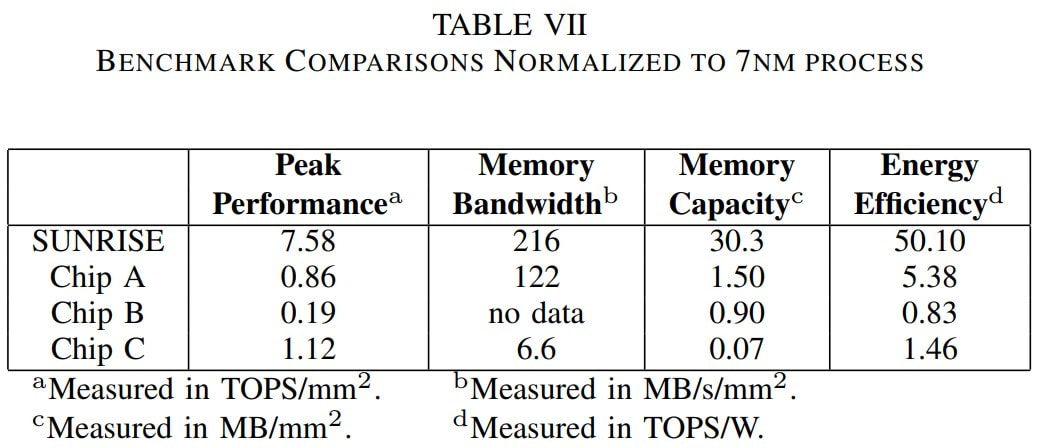

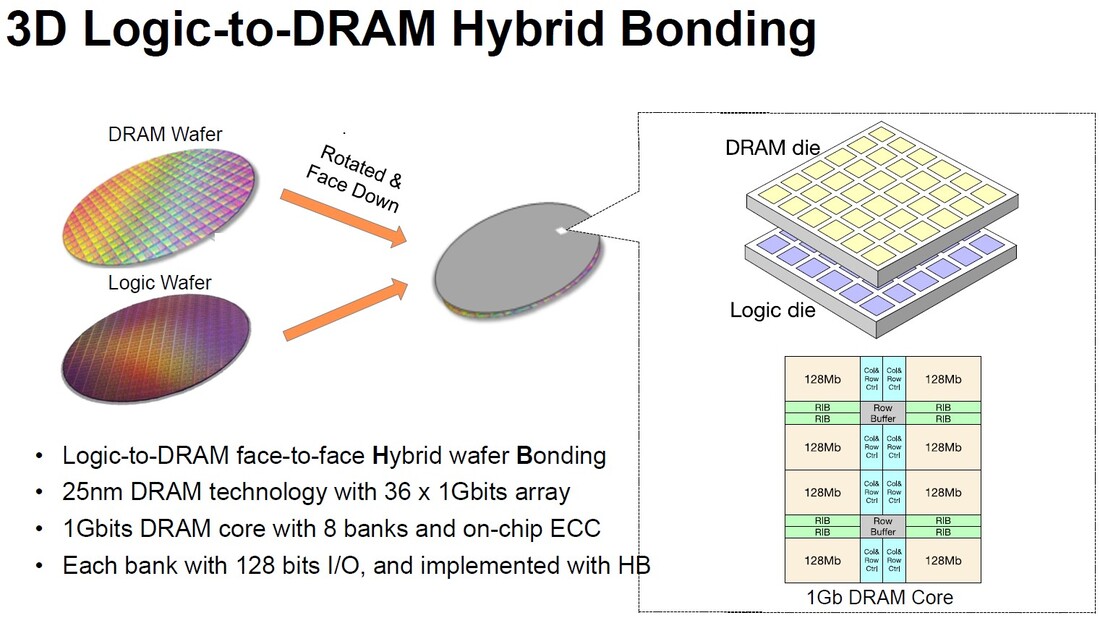

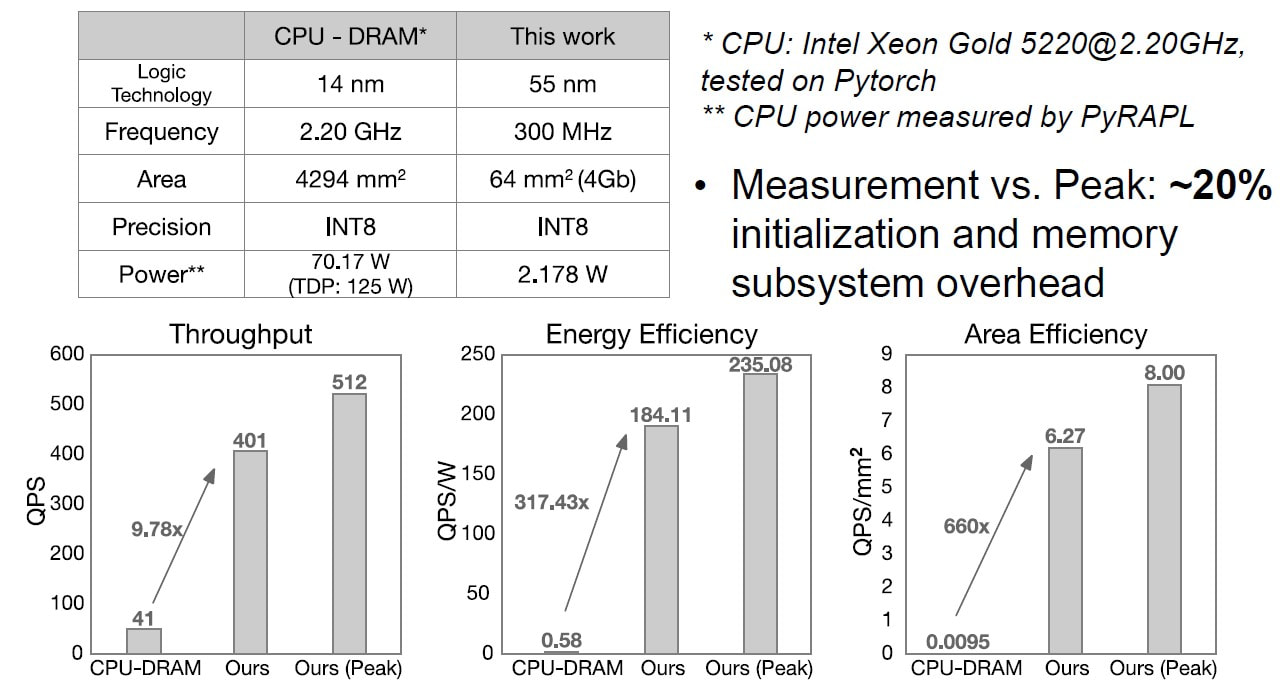

Leveraging Hybrid Bonding as an Alternative to Dimensional Scaling

The technology driver for the next decade is AI. Quoting Applied Materials CEO Gary Dickerson, “Are we ready for the biggest opportunity of our lifetime?”: “Gary’s been traveling the world talking to chipmakers and policymakers about a $10 trillion question: how do we capture the economic opportunity of AI, which will transform nearly every industry and institution over the coming years?” Gary presented the chart below, showing the 1,000x challenge facing the semiconductor industry.

Fig. 1: The required improvement in power-performance to support the demand of AI computing.

In fact, the AI challenge is a moving target, as computing demands are growing by 2X about every 3.5 months.

Fig. 2 Uri Frank, Google VP Engineering, at ChipEx 2021.

In recent years, tension has built up in the US-China relationship, resulting in the US blocking China from securing access to advanced semiconductor technology and equipment. This includes access to advanced lithography tools such as EUV. Accordingly, it was reported that only TSMC, Samsung, and Intel have stayed in the race for technology node scaling below 10nm. Hence, analysts say, it makes sense for Chinese firms to focus their resources on mature chip technology. This could explain the adoption of hybrid bonding as a core technology by multiple Chinese corporations. Hybrid bonding allows them to replace dimensional node scaling with system-level 3D scaling.

In August 2018, YMTC officially launched its ground-breaking Xtacking® architecture at the Flash Memory Summit, and won the “Best of Show” award. For its 3D NAND product, it uses two semiconductor manufacturing lines, one for the 3D NAND multi-level memory and one for the peripheral (memory control) circuits, as illustrated in Fig. 3 below.

Fig. 3 Xtacking: using hybrid bonding to stack periphery on top of the 3D NAND memory fabric

In September 2020, another Chinese company, IC League, published the results of its Heterogeneous Integration Technology on Chip (HITOC), an AI-oriented IC development, in a paper titled "Breaking the Memory Wall for AI Chip with a New Dimension".

Fig. 4 IC League’s Heterogeneous Integration on Chip (HITOC) Technology

Quoting from the paper: “With HITOC, we have two wafers, logic wafer, and memory wafer, bonded (using Hybrid Bonding) together [Fig. 4]. On the logic wafer, we have pools of processing units. Underneath the logic pool on the other wafer are pools of DRAM arrays.” The results reported by IC League showed overall improvements of orders of magnitude, as can be seen in the table below.

Fig. 5 HITOC technology: the Sunrise device vs. conventional 2D alternative devices

Last week at ISSCC 2022, Alibaba presented a more than 1,000x improvement for AI computing devices using hybrid bonding in a paper titled “184QPS/W 64Mb/mm2 3D Logic-to-DRAM Hybrid Bonding with Process-Near-Memory Engine for Recommendation System.” The paper rightly points out that in AI computing, data transfer dominates system performance and power consumption. Consequently, overcoming the “Memory Wall” is key for AI computing, and even more so given the rapid escalation of AI model computation requirements, as illustrated in Fig. 6 below.

Fig. 6 Escalation of AI model size compared to logic and memory annual technology improvements.

The paper details the device architecture, which leverages hybrid bonding to connect the multi-bank DRAM directly to the AI processors’ logic. Commodity DRAM dies are fairly small, typically under 50 mm², partly for higher yield and partly because of JEDEC standard constraints. Interestingly, Alibaba’s logic-to-DRAM 3D chip is a truly large chip: 602.22 mm².
An important aspect of this work was architecting the logic and the corresponding DRAM as a full system design, with multiple DRAM banks connecting directly to the multi-core logic underneath. This 3D logic-to-DRAM concept could even be extended to a full wafer-scale chip such as the Cerebras Wafer-Scale Engine (CS-2). Unfortunately, the Cerebras Wafer-Scale Engine currently uses only SRAM. Imagine a full DRAM wafer hybrid-bonded directly onto the Cerebras Wafer-Scale Engine. The company disclosed that its CS-2 has 40 gigabytes of on-chip SRAM. In the same area, DRAM could easily offer more than 1 terabyte, at least 25 times greater capacity. Now we are a step closer to breaking the memory wall.

Fig. 7 Illustration of system integration flow and the high level architecture

Alibaba’s paper title suggests that the work targets the Recommendation Systems segment of AI, in which Alibaba has a strong interest and has been developing systems, and publishing work, since at least 2017. This paper presents a very important breakthrough in performance and power reduction. Quoting from the paper: “Compared to the CPU-DRAM system, our chip achieves 9.78× speedup. Note that the throughput and memory capacity can be further improved by scaling up the number of hybrid bonding blocks or using more advanced process technologies to serve more complicated recommendation models. In terms of energy efficiency, which is significant in memory-bound applications, our work achieves 184.11QPS/W (QPS – Queries per Second), which outperforms the CPU-DRAM system by 317.43×. In terms of area efficiency, the high-density hybrid bonding improves QPS/mm2 by 660×.” The results were achieved using a relatively old 55 nm process node for the logic and were compared with a top-of-the-line Intel Xeon Gold CPU fabricated at 14 nm.

Fig. 8 Hybrid Bonding performance and energy improvements presented at ISSCC 2022.
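The two ratios in the Alibaba quotation can be combined to show what they imply about power. Energy efficiency is throughput per watt (QPS/W), so dividing the 317.43x efficiency gain by the 9.78x speedup gives the ratio of the baseline's power to the 3D chip's power, and dividing the chip's 184.11 QPS/W by 317.43 gives the baseline's implied efficiency. This is a minimal sketch that assumes (our assumption, not stated in the paper excerpt) that both ratios were measured against the same CPU-DRAM baseline on the same workload; the function names are hypothetical.

```python
# Sketch: figures implied by the ratios quoted from Alibaba's ISSCC 2022 paper.
# Assumption: the speedup and the energy-efficiency gain are both measured
# against the same CPU-DRAM baseline running the same workload.

SPEEDUP = 9.78             # throughput (QPS) ratio, 3D chip vs. CPU-DRAM
EFFICIENCY_GAIN = 317.43   # QPS/W ratio, 3D chip vs. CPU-DRAM
CHIP_QPS_PER_W = 184.11    # reported energy efficiency of the 3D chip


def implied_power_ratio(speedup: float, efficiency_gain: float) -> float:
    """Baseline power divided by 3D-chip power.

    efficiency = QPS / W, hence
    efficiency_gain = (QPS_chip / W_chip) / (QPS_cpu / W_cpu)
                    = speedup * (W_cpu / W_chip)
    """
    return efficiency_gain / speedup


def implied_baseline_efficiency(chip_qps_per_w: float, efficiency_gain: float) -> float:
    """QPS/W of the CPU-DRAM baseline implied by the two reported numbers."""
    return chip_qps_per_w / efficiency_gain


if __name__ == "__main__":
    ratio = implied_power_ratio(SPEEDUP, EFFICIENCY_GAIN)
    baseline = implied_baseline_efficiency(CHIP_QPS_PER_W, EFFICIENCY_GAIN)
    print(f"Power ratio (CPU-DRAM / 3D chip): {ratio:.1f}x")
    print(f"Implied CPU-DRAM efficiency: {baseline:.3f} QPS/W")
```

Under that assumption, the 3D chip would draw roughly 32x less power than the CPU-DRAM system (which would sit at about 0.58 QPS/W) while delivering about 10x more throughput.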
These results are multiple orders of magnitude better than those reported by AMD for its V-Cache, which uses hybrid bonding to add cache memory to its Ryzen CPU. There could be a few reasons for the difference, including the effort invested in re-architecting the system to fully leverage hybrid bonding. The Alibaba chip was certainly architected from the ground up with hybrid bonding in mind, whereas the AMD combination may have been an afterthought. Further, while AMD reported a vertical connectivity pitch of 9 µm, the Chinese vendors are reporting vertical pitches of 3 µm and in some cases even 1 µm.

The Sputnik Surprise

On February 7, 1958, the US established DARPA “to keep that technological superiority in the hands of the United States”. This mission is even more important now as we consider the potential power of AI technologies in the coming years.

"Dr. Zvi Or-Bach presented a talk for the SSCS Romania Chapter on March 24th, 2021."

"A vision for: New Types of Programmable Fabric 3D FPGA"

Below is a chapter extract from the book NANO-CHIPS 2030.

"In this book, a global team of experts from academia, research institutes and industry presents their vision on how new nano-chip architectures will enable the performance and energy efficiency needed for AI-driven advancements in autonomous mobility, healthcare, and man-machine cooperation." (Springer Link)

Below are sections from the chapters we wrote for the book. To get the full chapters, see NANO-CHIPS 2030 on Springer Link or alternatives such as Amazon.

In his blog post "The Continuing Evolution of Moore's Law," posted on the EE Times website on August 2nd, Dr. Michael Mayberry, chief technology officer of Intel Corporation, wrote that logic will move more toward 3D in the future.

"We moved to 3D starting with Trigate (FinFET) at 22 nm node, but an even better example is our announcement in May of a 96-layer, 4-bit-per-cell NAND flash that packs up to 1 terabit of information per die. This is a true post-Dennard example of packing increasing functions into a die without feature scaling. Over time, we expect logic to also move more toward 3D."
